The Last Usability Benchmarking Template You’ll Need

By: Michael Oliver

After five years of running UX research programs at tech startups, I’ve watched countless teams chase the wrong metrics, celebrate false victories, and spend too much time and energy only to miss critical insights hiding in plain sight. So when I built this Usability Benchmarking Template, I kept everything I liked from other templates and left out what I didn’t. It analyzes data automatically, includes standardized error tags and UX measurements, can easily be customized and expanded, and it’s free.

Why you should use this template

After using various templates in the past, I believe this template is the best option to help you:

- Save time: With previous templates, it took me 4 to 8 hours per participant to collect, synthesize, and summarize data. With this template, the results are generated automatically, so once it’s set up, a 1-hour session with each participant is all you need.

- Make moderating easy: This template is designed to help you moderate a session and collect data at the same time, so you don’t need a note-taker. It also includes a practice sheet so you can get comfortable with the material.

- Improve internal consistency: If an entire team uses the same template and UX measurements, then it can become a standardized usability benchmarking system rather than a one-off test.

- Make results accessible: An easy-to-read Results tab and a matching report template ensure researchers and stakeholders have the quantitative and qualitative information they need to evaluate the experience.

- Customize: You can customize the moderation script, introduction questions (free text), the jobs you test, job scenarios, job steps, success criteria, error tags, post-job questions (5-point agreement Likert scale), and exit questions (5-point agreement Likert scale). (It’s currently set up for the UMUX-Lite’s Usefulness and Ease of Use questions and grading scale, but you can change them or add more.)

Preparation

I use the Jobs to Be Done (JTBD) framework to set up the benchmarking test. If you’re not familiar with the framework, I suggest this article for a 5-minute read, or the first three chapters of Jim Kalbach’s JTBD Playbook for a deeper understanding. You don’t need to be an expert; the gist is that a [Job Performer] in a specific [Circumstance] is doing the [Job] that we’re going to evaluate.

Set it all up (30–60 min)

First, copy the template to your Google Drive and rename it. It contains multiple interconnected sheets, so don’t delete any tabs, even if they seem unnecessary at first. The template includes some scripts that will be flagged when you copy it, but don’t be intimidated!

Then, go to the Setup tab and follow the steps under the “Directions” column. (Please read the directions!) I created an example benchmarking sheet for the content in this blog.

Steps 1–4: Product and Environment Info (5 min)

Steps 1–3 involve adding your product’s name, the test environment login information (or however else your participants will interact with your product, service, or prototype), and the introduction questions. The script is reusable, so there shouldn’t be much to change besides the test environment or prototype information. I recommend using an environment as close to the real thing as possible to get accurate results.

Step 4 is the test scenario that covers all of the jobs you’ll be testing. This ‘big job statement’ should be at a higher level than the individual jobs you test. For example, ‘Maintain a code repository’ is a higher-level job than ‘Manage user access and controls.’

Steps 5–8: Jobs, Job Scenarios, and Success Criteria (20–50 min)

I recommend doing the steps below while you have the test environment open so you can see what the real user experience looks like.

You can enter up to 10 jobs during Steps 5–8, though I strongly suggest limiting this to 7 for the sake of session length. Each job requires four components in the template:

Job Statement (Step 5): Follow the syntax Verb + Object + Clarifier, and describe what the participant will be doing in the language they’re used to.

Test Scenarios (Step 6): Write this carefully; it should provide ALL of the context a participant needs to complete their tasks.

Job Steps (Step 7): The template includes conditional formatting that allows up to 10 steps for each job. Enter steps in order, adding {Optional} to any step that isn’t required.

Success Criteria (Step 8): An ideal, objective end state in the product that is measurable and achievable based on the information in the Test Scenario. Whether participants achieve all the success criteria determines their completion status for each job, and therefore the overall usability grade for the test. Because of this, your success criteria should be supported by previous research.

For example: a completed intake form with a name and zip code.

Review each step, but especially the test scenario (Step 6), since you’ll be sharing that exact phrasing with the participant for each job. You can fine-tune the language during your practice sessions.
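To make the four components concrete, here is a minimal Python sketch of one job as plain data, with the limits described above (up to 10 jobs, about 7 recommended, up to 10 steps per job). The class and function names, the example job, and the rule that a step tagged {Optional} isn’t required are assumptions for illustration; the template itself stores all of this in the Setup tab, not in code.

```python
from dataclasses import dataclass, field

MAX_JOBS = 10          # the template accepts up to 10 jobs
RECOMMENDED_JOBS = 7   # suggested cap to keep sessions a reasonable length
MAX_STEPS = 10         # the template allows up to 10 steps per job


@dataclass
class Job:
    """Hypothetical model of one job from the Setup tab."""
    verb: str
    obj: str
    clarifier: str
    scenario: str
    steps: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)

    @property
    def statement(self) -> str:
        # Job statement syntax: Verb + Object + Clarifier
        return " ".join(part for part in (self.verb, self.obj, self.clarifier) if part)

    @property
    def required_steps(self) -> list[str]:
        # Assumption: steps tagged {Optional} are not required for success
        return [s for s in self.steps if "{Optional}" not in s]

    def problems(self) -> list[str]:
        issues = []
        if not self.scenario.strip():
            issues.append("test scenario is empty")
        if not 1 <= len(self.steps) <= MAX_STEPS:
            issues.append(f"needs 1-{MAX_STEPS} steps, has {len(self.steps)}")
        if not self.success_criteria:
            issues.append("no success criteria")
        return issues


def review_plan(jobs: list[Job]) -> list[str]:
    """Collect warnings for the whole test plan before the first session."""
    warnings = []
    if len(jobs) > MAX_JOBS:
        warnings.append(f"{len(jobs)} jobs exceeds the limit of {MAX_JOBS}")
    elif len(jobs) > RECOMMENDED_JOBS:
        warnings.append(f"{len(jobs)} jobs may make sessions too long")
    for i, job in enumerate(jobs, start=1):
        warnings.extend(f"Job {i} ({job.statement}): {p}" for p in job.problems())
    return warnings


# Hypothetical example job
access = Job(
    verb="Manage",
    obj="user access",
    clarifier="for a new teammate",
    scenario="A new developer joined your team today and needs write access.",
    steps=["Open repository settings", "Review current members {Optional}", "Invite the developer"],
    success_criteria=["The developer is listed with write access"],
)
print(access.statement)       # Manage user access for a new teammate
print(review_plan([access]))  # []
```

Keeping the statement split into verb, object, and clarifier makes it easy to spot a job that is missing one of the three parts before you start writing the scenario.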

Steps 9–11: Configuring Measurements (5 min)

Add any additional Error Tags (Step 9) you may want to use. Over a dozen tags are already included, and a custom script in the sheet copies any new error tags from the Setup tab to the Tags Master tab. You can also add tags in the middle of a session, so don’t worry too much about it now.
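The template’s own sync script isn’t shown here. As a rough sketch of the logic such a sync needs, assuming tags are matched case-insensitively and blank cells are ignored, the merge could look like this in Python (the function name and tag names are hypothetical):

```python
def merge_error_tags(master: list[str], setup: list[str]) -> list[str]:
    """Append tags from the Setup tab that the master list doesn't have yet.

    Matching is case-insensitive and ignores surrounding spaces, so
    "Terminology " and "terminology" count as the same tag. Blank cells
    are skipped, and the original order of both lists is preserved.
    """
    merged = list(master)
    seen = {tag.strip().casefold() for tag in master}
    for tag in setup:
        cleaned = tag.strip()
        key = cleaned.casefold()
        if cleaned and key not in seen:
            merged.append(cleaned)
            seen.add(key)
    return merged


# Hypothetical tag names for illustration
print(merge_error_tags(["Navigation", "Terminology"], ["terminology ", "Slow load", "", "Slow Load"]))
# ['Navigation', 'Terminology', 'Slow load']
```

Deduplicating on a normalized key keeps the Tags Master list clean even if someone retypes an existing tag with different capitalization mid-session.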

Post-Job and Exit Questions (Steps 10 & 11) are questions at the end of each job and the end of the overall test, respectively. The template currently uses:

A modified version of the UMUX-Lite, sometimes called the UX-Lite, which includes Usefulness and Ease of Use measures, both on a 5-point agree-disagree scale. These are asked after each job and again after the overall test.

I also like to add a reverse-coded version of the Customer Effort Score (CES) and an extra trust measure at the end of the test, but feel free to remove or modify these.

The template lets you add up to three post-job questions and up to five exit questions, although all questions must use a 5-point agree-disagree scale unless you significantly change the sheet.
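The sheet’s exact scoring formulas aren’t spelled out here, so this Python sketch shows one common approach rather than the template’s implementation: each 5-point response (1 = strongly disagree, 5 = strongly agree) is rescaled linearly to 0–100, reverse-coded items such as the modified CES are flipped first (1↔5, 2↔4), and the UX-Lite score is the mean of the Usefulness and Ease of Use items. The question limits mirror the ones above; the function names are hypothetical.

```python
SCALE_MIN, SCALE_MAX = 1, 5   # 5-point agree-disagree scale
MAX_POST_JOB_QUESTIONS = 3
MAX_EXIT_QUESTIONS = 5


def to_percent(response: int, reverse: bool = False) -> float:
    """Rescale one 5-point response to 0-100, optionally reverse-coding it."""
    if not SCALE_MIN <= response <= SCALE_MAX:
        raise ValueError(f"response must be {SCALE_MIN}-{SCALE_MAX}, got {response}")
    if reverse:
        # Flip the scale so higher is always better: 1<->5, 2<->4, 3 stays 3
        response = SCALE_MIN + SCALE_MAX - response
    return (response - SCALE_MIN) / (SCALE_MAX - SCALE_MIN) * 100


def ux_lite(usefulness: int, ease_of_use: int) -> float:
    """Mean of the two rescaled items, on a 0-100 scale."""
    return (to_percent(usefulness) + to_percent(ease_of_use)) / 2


def check_question_counts(post_job: list[str], exit_questions: list[str]) -> None:
    """Raise if the setup exceeds the template's question limits."""
    if len(post_job) > MAX_POST_JOB_QUESTIONS:
        raise ValueError(f"at most {MAX_POST_JOB_QUESTIONS} post-job questions")
    if len(exit_questions) > MAX_EXIT_QUESTIONS:
        raise ValueError(f"at most {MAX_EXIT_QUESTIONS} exit questions")


print(ux_lite(usefulness=4, ease_of_use=3))  # 62.5
print(to_percent(2, reverse=True))           # 75.0
```

Reverse-coding before averaging matters: without it, a participant who strongly disagrees that a job took a lot of effort would drag the score down instead of up.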

Lastly, to make moderating sessions as easy as possible, I recommend going into each Participant - ## tab and hiding any rows for job areas that aren’t filled in on the Setup tab. (This was the one bit of automation I couldn’t fit in.)

Moderating Sessions

There’s a Practice Session tab that mirrors the Participant - ## tabs. I recommend conducting a practice session with a colleague who is less familiar with the product area, and then making any final adjustments to the language in the scenarios or the order of steps.

The script is everything in blue text

Read anything in blue out loud to the participant. You should also send the Job Scenarios to the participant through your video conference chat (e.g., Zoom chat) so they can read the scenarios at their own pace.

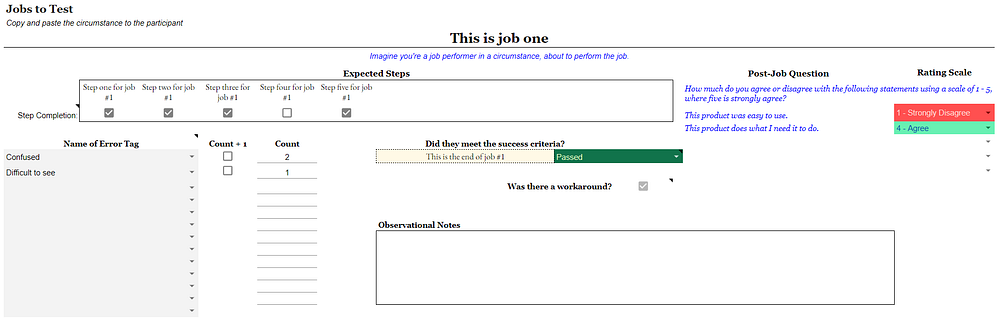

Collecting data

The sheet moves from left to right and top to bottom during moderation to help with data collection.

Step Completion. Use the checkbox under each step to mark whether the participant accomplished that step. Personally, I allow the participant to ask a small question to clarify the goal and still ‘pass’ them, but if they need anything more, I fail them on that step. If they do miss a step, I tell the participant how to accomplish it, leave that step unchecked, and continue collecting data on the rest of the experience.

Error Tags. If the participant deviates from the expected path, record their behavior by choosing an error tag from the drop-down on the left side. Most pre-loaded tags describe common UX problems and/or heuristics. You can also type in a new tag and reuse it in future sessions. Check the box under ‘Count + 1’ to add one to a tag’s count. The list of tags also appears on the far right of the first job test area so you can refresh your memory during moderation.

Job Completion Rate. Each job can end as Pass, Recovery, or Fail. ‘Pass’ and ‘Fail’ are self-explanatory; ‘Recovery’ is when the moderator needs to provide a gentle prompt or the participant uses trial and error to complete the task successfully. You can change these definitions to match your organization’s preferred criteria, though.

Workaround. A workaround (True/False) is when the participant completes the job in an unusual way. This data doesn’t go into the usability grade, but it can help paint a picture of what the user experienced.

Post-Job Questions. Currently set up for the two UMUX-Lite questions on a 5-point agreement scale.

Observation Notes. A textbox for other notes on the participant’s experience.
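If you ever want to export these fields or analyze them outside the sheet, it helps to think of each job in a session as one record. Here is a minimal Python sketch of that record, assuming the Pass/Recovery/Fail definitions above; the class names, the classify_outcome helper, and the tag names are hypothetical, and in practice the moderator’s judgment still decides the outcome.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    PASS = "Pass"
    RECOVERY = "Recovery"
    FAIL = "Fail"


def classify_outcome(criteria_met: bool, prompted: bool, trial_and_error: bool) -> Outcome:
    """Apply the Pass/Recovery/Fail definitions: success criteria first, then how it was reached."""
    if not criteria_met:
        return Outcome.FAIL
    if prompted or trial_and_error:
        return Outcome.RECOVERY
    return Outcome.PASS


@dataclass
class JobObservation:
    """One participant's data for one job, mirroring the fields in a Participant tab."""
    steps_completed: list[bool]                 # one checkbox per job step
    outcome: Outcome
    workaround: bool = False                    # recorded, but not part of the grade
    error_counts: Counter = field(default_factory=Counter)
    post_job: dict[str, int] = field(default_factory=dict)  # question -> 1..5
    notes: str = ""

    def count_error(self, tag: str) -> None:
        # Same effect as ticking 'Count + 1' next to a tag
        self.error_counts[tag] += 1

    @property
    def total_errors(self) -> int:
        return sum(self.error_counts.values())


obs = JobObservation(
    steps_completed=[True, False, True],
    outcome=classify_outcome(criteria_met=True, prompted=True, trial_and_error=False),
    post_job={"Usefulness": 4, "Ease of Use": 3},
)
obs.count_error("Terminology")
obs.count_error("Terminology")
print(obs.outcome.value, obs.total_errors)  # Recovery 2
```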

Summarizing Data

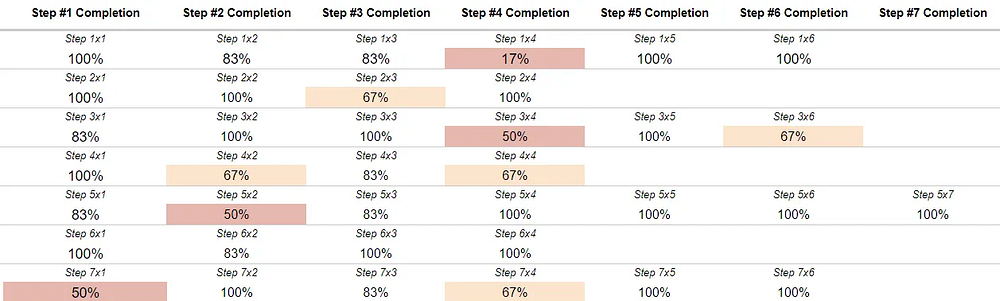

Thankfully, you don’t have to do anything to summarize the data. Just go to the Results tab to see it summarized. Conditional formatting throughout the Results tab highlights anything under 75% in yellow text and anything under 60% in red text.
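The highlighting rule is simple enough to express as code. This Python sketch assumes ‘under’ means strictly less than, so exactly 75% gets no highlight and exactly 60% is yellow; in the sheet itself these are conditional formatting rules, not a script, and the function name is hypothetical.

```python
def highlight(percent: float) -> str:
    """Text color for a 0-100 value on the Results tab (assumed strict thresholds)."""
    if percent < 60:
        return "red"
    if percent < 75:
        return "yellow"
    return "none"


for value in (82.0, 75.0, 68.5, 60.0, 41.7):
    print(value, highlight(value))
```

Checking the red threshold first matters, since any value under 60% is also under 75%.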

The bulk of the results tab will be the completion rate for each step of the jobs tested (see the image below).

Further to the right are the average error count per participant, the overall job completion rate, the UMUX score, and a numeric usability grade. You can see the Ease of Use and Usefulness questions (and any other post-job questions you added) in the hidden column next to the UMUX score. The numeric usability grade combines almost all of the data you collected, as follows:

Usability Grade = Average(Completion Rate for Steps 1–10, Overall Completion Rate, UMUX Score) − 1.25 × Average Error Count
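To make the formula concrete, here is a Python sketch with made-up numbers for one job. Two details are assumptions, since the formula leaves them open: that ‘Average’ is a flat average over each step’s completion rate plus the overall completion rate and the UMUX score (all on 0–100), and that ‘Recovery’ counts as completed when computing the overall rate. Only the steps a job actually has are included, rather than always ten. All function names are hypothetical.

```python
ERROR_PENALTY = 1.25  # points subtracted per average error, from the formula above


def step_completion_rates(step_checks: list[list[bool]]) -> list[float]:
    """step_checks[p][s] is True if participant p completed step s; returns 0-100 rates."""
    n_participants = len(step_checks)
    n_steps = len(step_checks[0])
    return [100 * sum(checks[s] for checks in step_checks) / n_participants for s in range(n_steps)]


def job_completion_rate(outcomes: list[str], count_recovery: bool = True) -> float:
    """Share of participants who completed the job; counting Recovery is an assumption."""
    completed = {"Pass", "Recovery"} if count_recovery else {"Pass"}
    return 100 * sum(outcome in completed for outcome in outcomes) / len(outcomes)


def usability_grade(step_rates: list[float], overall_rate: float,
                    umux_score: float, avg_errors: float) -> float:
    """Flat average of every step rate, the overall rate, and UMUX, minus the error penalty."""
    components = [*step_rates, overall_rate, umux_score]
    return sum(components) / len(components) - ERROR_PENALTY * avg_errors


# Hypothetical job: 4 participants, 2 steps
checks = [[True, True], [True, False], [True, True], [False, True]]
outcomes = ["Pass", "Recovery", "Pass", "Fail"]
errors_per_participant = [1, 3, 0, 4]

steps = step_completion_rates(checks)                                   # [75.0, 75.0]
overall = job_completion_rate(outcomes)                                 # 75.0
avg_errors = sum(errors_per_participant) / len(errors_per_participant)  # 2.0
print(usability_grade(steps, overall, umux_score=70.0, avg_errors=avg_errors))  # 71.25
```

With these numbers, the components average to 73.75 and two errors per participant cost 2.5 points. Note that under a flat average, a job with many steps weights step completion more heavily than the overall rate or UMUX.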

Below the results for each step are the results from the post-test questions you asked the participant. If you add or edit any of the questions on the Setup tab, those changes will be reflected here.

Finally, the last data presented is the error tag breakdown across each job. This will help paint the story of the participants’ struggles during the test. You can also use this information to guide you in what types of quotes you may want to highlight in reporting, so stakeholders have both quantitative and qualitative information about the experience.
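The breakdown is essentially a tally of tags per job across all participants. Here is a minimal Python sketch, assuming each session is exported as a mapping from job name to the list of tags logged (one entry per ‘Count + 1’ click); the function, job, and tag names are hypothetical.

```python
from collections import Counter, defaultdict


def error_breakdown(sessions: list[dict[str, list[str]]]) -> dict[str, list[tuple[str, int]]]:
    """Total each error tag per job across all sessions, most frequent first."""
    by_job = defaultdict(Counter)
    for session in sessions:
        for job, tags in session.items():
            by_job[job].update(tags)
    return {job: counts.most_common() for job, counts in by_job.items()}


sessions = [
    {"Manage user access": ["Terminology", "Navigation", "Terminology"]},
    {"Manage user access": ["Terminology"], "Review a pull request": ["Feedback"]},
]
for job, tags in error_breakdown(sessions).items():
    print(job, tags)
# Manage user access [('Terminology', 3), ('Navigation', 1)]
# Review a pull request [('Feedback', 1)]
```

Sorting by frequency puts the most common struggle first, which is usually where the most useful quotes for the report are.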

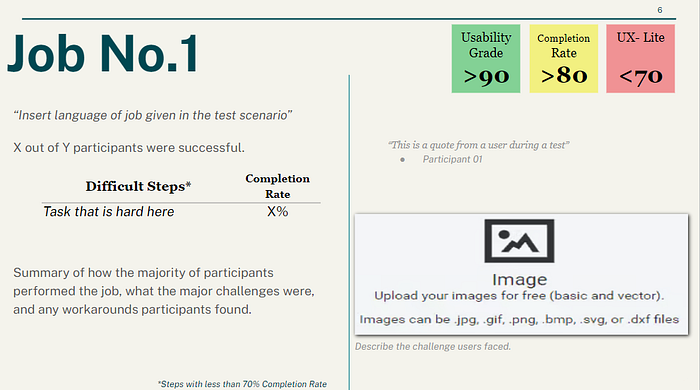

Sharing the data with stakeholders

The easiest way to explain the data is to follow the users’ experience through each job you tested. I like to have the test environment open so I can walk through the steps in each job and show the most common types of errors participants made, the completion rate for each step and for the overall job, and the UMUX scores. If a UMUX score was low, I report whether the Ease of Use or the Usefulness question was the primary cause.
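If you want a quick, repeatable way to check which question drove a low score, here is a Python sketch. It assumes ‘low’ means below the Results tab’s 75% highlight threshold and rescales each 5-point response linearly to 0–100; both choices, and the function names, are assumptions rather than part of the template.

```python
from statistics import mean
from typing import Optional

LOW_THRESHOLD = 75.0  # assumption: reuse the Results tab's yellow highlight threshold


def to_percent(response: int) -> float:
    """Rescale a 1-5 agree-disagree response to 0-100."""
    return (response - 1) / 4 * 100


def low_umux_driver(ease_of_use: list[int], usefulness: list[int]) -> Optional[str]:
    """Return which item pulled a low UMUX score down, or None if the score isn't low."""
    eou = mean([to_percent(r) for r in ease_of_use])
    use = mean([to_percent(r) for r in usefulness])
    if (eou + use) / 2 >= LOW_THRESHOLD:
        return None
    if eou == use:
        return "Ease of Use and Usefulness equally"
    return "Ease of Use" if eou < use else "Usefulness"


# Hypothetical responses from three participants for one job
print(low_umux_driver(ease_of_use=[2, 3, 2], usefulness=[4, 4, 5]))  # Ease of Use
```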

You can also use this report template, which aligns with the benchmarking template and includes some helpful color coding.